Ratatoskr — Voice to Clipboard, No Cloud Required

A local speech-to-text tool — press a hotkey, speak, paste the transcription. No cloud, no accounts.

Every dictation tool I tried wanted me to sign up, open a browser, or send my audio to someone’s server. I just wanted to press a key, talk, and paste. So I built one that does exactly that.

The idea

Ratatoskr sits in your system tray and does one thing: press a hotkey, speak, get text in your clipboard. Everything runs locally through faster-whisper, a reimplementation of OpenAI's open Whisper models on top of the CTranslate2 inference engine. No audio ever leaves your machine. The only network call is a one-time model download from Hugging Face on first run (and again if you switch to a model size you haven't downloaded yet).

That last part mattered to me. I dictate notes, draft messages, dump half-formed thoughts. None of that should end up on someone else’s server.

How it works

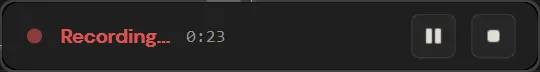

You press Ctrl+Alt+R (or whatever you configure). A small overlay pops up in the bottom-right corner showing that you’re recording, with a timer and pause/stop buttons.
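The post doesn't say how the overlay's timer handles pausing. Here is a minimal sketch, assuming a hypothetical `RecordingTimer` that the overlay polls about once a second (for example from a Qt `QTimer`). It only counts time while recording is active, and the clock is injectable so the logic can be tested without waiting in real time.

```python
import time
from typing import Callable, Optional


class RecordingTimer:
    """Hypothetical elapsed-time counter that stops advancing while paused."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._accumulated = 0.0                  # seconds recorded before the current run
        self._started_at: Optional[float] = None  # None while paused or stopped

    def start(self) -> None:
        # Calling start() while already running is a no-op.
        if self._started_at is None:
            self._started_at = self._clock()

    def pause(self) -> None:
        # Bank the time of the current run and stop the clock.
        if self._started_at is not None:
            self._accumulated += self._clock() - self._started_at
            self._started_at = None

    resume = start  # resuming is the same as starting a new run

    def elapsed(self) -> float:
        running = 0.0 if self._started_at is None else self._clock() - self._started_at
        return self._accumulated + running

    def label(self) -> str:
        # mm:ss text for the overlay
        total = int(self.elapsed())
        return f"{total // 60:02d}:{total % 60:02d}"
```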

When you stop, the audio goes straight to Whisper. A few seconds later the transcription lands in your clipboard. Paste it wherever you want — email, Slack, your editor, a terminal. If you also want a .txt file saved, there’s a toggle for that.
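The output step could be as small as the following sketch. The function name, the `transcript_<timestamp>.txt` naming scheme, and passing the clipboard call in as a parameter are all assumptions made for illustration. In a PySide6 app the callable might be `QGuiApplication.clipboard().setText`.

```python
from datetime import datetime
from pathlib import Path
from typing import Callable, Optional


def deliver_transcript(
    text: str,
    copy_to_clipboard: Callable[[str], None],
    save_dir: Optional[Path] = None,
    now: Optional[datetime] = None,
) -> Optional[Path]:
    """Hypothetical helper: copy text to the clipboard, optionally also save a .txt file."""
    copy_to_clipboard(text)
    if save_dir is None:              # the .txt toggle is off
        return None
    save_dir.mkdir(parents=True, exist_ok=True)
    stamp = (now or datetime.now()).strftime("%Y-%m-%d_%H-%M-%S")
    path = save_dir / f"transcript_{stamp}.txt"
    path.write_text(text, encoding="utf-8")
    return path
```

Injecting the clipboard function keeps the helper free of Qt, so it can be exercised without a running `QApplication`.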

That’s the entire workflow. No windows to manage, no UI to navigate. The app is invisible until you need it.

Under the hood

The stack is intentionally small:

- PySide6 for the system tray, overlay, and settings dialog

- faster-whisper for transcription (GPU via CUDA when available, CPU fallback)

- sounddevice for microphone capture at 16 kHz mono — the format Whisper expects

- Windows RegisterHotKey API for the global shortcut — works even when the app isn’t focused
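For the capture side, a minimal sketch with sounddevice might look like this. It assumes a callback-based `sd.InputStream` at 16 kHz, mono, float32. The `Recorder` class and its `paused` flag are hypothetical; the post doesn't say how pausing is actually implemented.

```python
import threading
from typing import List

import numpy as np

SAMPLE_RATE = 16_000  # Whisper's expected sample rate
CHANNELS = 1          # mono


class Recorder:
    """Hypothetical recorder that keeps microphone audio in memory as float32."""

    def __init__(self) -> None:
        self._chunks: List[np.ndarray] = []
        self._lock = threading.Lock()
        self.paused = False
        self._stream = None

    def _callback(self, indata, frames, time_info, status) -> None:
        # Runs on sounddevice's audio thread; indata has shape (frames, CHANNELS).
        if self.paused:
            return  # drop audio while the user has paused
        with self._lock:
            # Copy: sounddevice reuses the indata buffer after the callback returns.
            self._chunks.append(indata[:, 0].copy())

    def start(self) -> None:
        import sounddevice as sd  # lazy import so the class can be tested without an audio device
        self._stream = sd.InputStream(
            samplerate=SAMPLE_RATE, channels=CHANNELS, dtype="float32", callback=self._callback
        )
        self._stream.start()

    def stop(self) -> np.ndarray:
        """Stop capturing and return one 1-D float32 array of all recorded samples."""
        if self._stream is not None:
            self._stream.stop()
            self._stream.close()
            self._stream = None
        with self._lock:
            chunks, self._chunks = self._chunks, []
        if not chunks:
            return np.zeros(0, dtype=np.float32)
        return np.concatenate(chunks).astype(np.float32, copy=False)
```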

The model preloads in a background thread at startup so the first transcription doesn’t stall. Audio is kept in memory as a float32 numpy array and passed directly to Whisper — no temp files in the happy path.
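A background preload with a GPU-to-CPU fallback could be sketched as follows. It assumes faster-whisper's `WhisperModel(model_size, device=..., compute_type=...)` constructor. The `load_whisper` and `ModelPreloader` names and the `float16`/`int8` compute types are illustrative choices, not necessarily what Ratatoskr uses. Some CUDA library problems only show up at the first transcription rather than at load time, so a real app may need a second fallback there.

```python
import threading
from typing import Any, Callable, Optional


def load_whisper(model_size: str = "small") -> Any:
    """Hypothetical loader: try CUDA first, fall back to CPU."""
    from faster_whisper import WhisperModel  # heavy import, deferred to the loader thread
    try:
        return WhisperModel(model_size, device="cuda", compute_type="float16")
    except Exception:
        # No usable GPU or CUDA runtime: run on CPU with int8 quantization.
        return WhisperModel(model_size, device="cpu", compute_type="int8")


class ModelPreloader:
    """Starts loading a model in a daemon thread; get() blocks until it is ready."""

    def __init__(self, loader: Callable[[], Any]) -> None:
        self._loader = loader
        self._ready = threading.Event()
        self._model: Any = None
        self._error: Optional[Exception] = None
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def _run(self) -> None:
        try:
            self._model = self._loader()
        except Exception as exc:  # hand the error to whoever calls get()
            self._error = exc
        finally:
            self._ready.set()

    def get(self, timeout: Optional[float] = None) -> Any:
        if not self._ready.wait(timeout):
            raise TimeoutError("model is still loading")
        if self._error is not None:
            raise self._error
        return self._model
```

At startup this would be `preloader = ModelPreloader(lambda: load_whisper("small"))`, and the transcription path calls `preloader.get()`, which returns immediately once loading has finished.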

There is one file written to disk: a recovery WAV saved right before transcription starts. If the app crashes mid-transcribe, the next launch picks it up and finishes the job. On success or failure, the file is deleted.
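The recovery flow maps naturally onto `try`/`finally`. The `finally` block runs on success and on any Python-level error. A hard crash skips it, which is exactly what leaves the file behind for the next launch. This is a stdlib-only sketch with hypothetical function names. It stores 16-bit PCM because the standard `wave` module only writes integer PCM, so the recovered audio is slightly quantized compared with the in-memory float32 array.

```python
import sys
import wave
from array import array
from pathlib import Path
from typing import Callable, List, Optional, Sequence

SAMPLE_RATE = 16_000


def write_recovery_wav(path: Path, samples: Sequence[float]) -> None:
    """Save float samples in [-1, 1] as 16-bit mono PCM."""
    pcm = array("h", (int(round(max(-1.0, min(1.0, float(s))) * 32767)) for s in samples))
    if sys.byteorder == "big":
        pcm.byteswap()  # WAV sample data is little-endian
    with wave.open(str(path), "wb") as wf:
        wf.setnchannels(1)
        wf.setsampwidth(2)
        wf.setframerate(SAMPLE_RATE)
        wf.writeframes(pcm.tobytes())


def read_recovery_wav(path: Path) -> List[float]:
    with wave.open(str(path), "rb") as wf:
        pcm = array("h")
        pcm.frombytes(wf.readframes(wf.getnframes()))
    if sys.byteorder == "big":
        pcm.byteswap()
    return [s / 32767 for s in pcm]


def transcribe_with_recovery(
    samples: Sequence[float], transcribe: Callable[[Sequence[float]], str], path: Path
) -> str:
    write_recovery_wav(path, samples)  # survives a crash during transcription
    try:
        return transcribe(samples)
    finally:
        path.unlink(missing_ok=True)   # removed on success and on a Python-level error


def recover_pending(path: Path, transcribe: Callable[[Sequence[float]], str]) -> Optional[str]:
    """Run at startup: finish a transcription left behind by a crash, if any."""
    if not path.exists():
        return None
    try:
        # A real transcribe callable would convert this list to a float32 numpy array.
        return transcribe(read_recovery_wav(path))
    finally:
        path.unlink(missing_ok=True)
```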

Settings

Right-click the tray icon to open settings. You can change the Whisper model size (from tiny for speed to large-v3 for accuracy), pick a source language or leave it on auto-detect, remap the hotkey, and toggle clipboard/file output.
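The settings could be modeled as a small validated dataclass. This sketch assumes they are stored in a TOML file; the audit section mentions TOML, but the file name and keys here are hypothetical. `language=None` stands for auto-detect, which matches faster-whisper's behavior when no language is passed. `tomllib` is only in the standard library from Python 3.11, so on 3.10 the sketch falls back to the `tomli` backport.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import Any, Mapping, Optional

MODEL_SIZES = ("tiny", "base", "small", "medium", "large-v3")


@dataclass
class Settings:
    model_size: str = "small"        # the default model size
    language: Optional[str] = None   # None = let Whisper auto-detect
    hotkey: str = "Ctrl+Alt+R"
    copy_to_clipboard: bool = True
    save_txt: bool = False

    @classmethod
    def from_mapping(cls, data: Mapping[str, Any]) -> "Settings":
        """Build settings from parsed TOML, ignoring unknown or invalid values."""
        s = cls()
        if data.get("model_size") in MODEL_SIZES:
            s.model_size = data["model_size"]
        lang = data.get("language")
        if isinstance(lang, str) and lang not in ("", "auto"):
            s.language = lang
        if isinstance(data.get("hotkey"), str):
            s.hotkey = data["hotkey"]
        for key in ("copy_to_clipboard", "save_txt"):
            if isinstance(data.get(key), bool):
                setattr(s, key, data[key])
        return s


def load_settings(path: Path) -> Settings:
    if not path.exists():
        return Settings()
    try:
        import tomllib  # standard library on Python 3.11+
    except ModuleNotFoundError:
        import tomli as tomllib  # third-party backport for Python 3.10
    with path.open("rb") as f:  # tomllib requires a binary file
        return Settings.from_mapping(tomllib.load(f))
```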

The small model is the default. It’s a good balance: fast enough for interactive dictation, accurate enough for most languages. If you have a GPU, the bigger models become practical too.

Vibe-coded, then audited

Like Mamrot, this was a vibe-coding project: I described what I wanted and let Claude build the first version. Before making it public, I had both Claude and GPT audit the code against each other: thread safety, license compliance, privacy (making sure no audio lingers on disk after errors), TOML structure, font rendering bugs. Two AIs catching each other’s blind spots turned out to be a surprisingly effective review process. Vibe-coding gets you to a working prototype fast. The cross-audit is what makes it releasable.

Lessons learned

RegisterHotKey over keyboard libraries. I started with the keyboard Python package for global hotkeys. It worked — until it didn’t. Some antivirus tools flag low-level keyboard hooks, and the library needed admin privileges in certain setups. Switching to the native Win32 RegisterHotKey API solved all of that. It’s a few more lines of ctypes, but it just works.
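The ctypes side might look roughly like this. This is a sketch, not Ratatoskr's actual code, and the post doesn't show how the hotkey is wired into Qt. `parse_hotkey` and `run_hotkey_loop` are hypothetical names. The constants are the documented Win32 values: `MOD_*` flags, `MOD_NOREPEAT`, `WM_HOTKEY = 0x0312`, and virtual-key codes for A–Z and 0–9 equal to their ASCII codes. With `hWnd=None`, `WM_HOTKEY` is posted to the registering thread's own message queue, so the same thread must pump messages.

```python
import ctypes
import sys
from typing import Callable, Tuple

MOD_ALT, MOD_CONTROL, MOD_SHIFT, MOD_WIN = 0x0001, 0x0002, 0x0004, 0x0008
MOD_NOREPEAT = 0x4000  # don't fire repeatedly while the key is held down
WM_HOTKEY = 0x0312

_MODIFIERS = {"ctrl": MOD_CONTROL, "control": MOD_CONTROL, "alt": MOD_ALT,
              "shift": MOD_SHIFT, "win": MOD_WIN}


def parse_hotkey(text: str) -> Tuple[int, int]:
    """Turn a string like 'Ctrl+Alt+R' into (fsModifiers, virtual-key code)."""
    *mods, key = [part.strip().lower() for part in text.split("+")]
    flags = MOD_NOREPEAT
    for m in mods:
        if m not in _MODIFIERS:
            raise ValueError(f"unknown modifier: {m!r}")
        flags |= _MODIFIERS[m]
    if len(key) == 1 and key.isascii() and key.isalnum():
        vk = ord(key.upper())         # VK codes for A-Z and 0-9 match ASCII
    elif key.startswith("f") and key[1:].isdigit() and 1 <= int(key[1:]) <= 24:
        vk = 0x70 + int(key[1:]) - 1  # VK_F1 is 0x70
    else:
        raise ValueError(f"unsupported key: {key!r}")
    return flags, vk


def run_hotkey_loop(text: str, on_press: Callable[[], None], hotkey_id: int = 1) -> None:
    """Register the hotkey and pump this thread's messages (Windows only, blocks)."""
    if sys.platform != "win32":
        raise OSError("RegisterHotKey is a Win32 API")
    from ctypes import wintypes
    user32 = ctypes.WinDLL("user32", use_last_error=True)
    flags, vk = parse_hotkey(text)
    if not user32.RegisterHotKey(None, hotkey_id, flags, vk):
        raise ctypes.WinError(ctypes.get_last_error())  # e.g. combo already taken
    try:
        msg = wintypes.MSG()
        # GetMessageW returns 0 on WM_QUIT and -1 on error; both end the loop.
        while user32.GetMessageW(ctypes.byref(msg), None, 0, 0) > 0:
            if msg.message == WM_HOTKEY and msg.wParam == hotkey_id:
                on_press()
    finally:
        user32.UnregisterHotKey(None, hotkey_id)
```

Running `run_hotkey_loop` on a dedicated thread keeps the Qt event loop free. Posting `WM_QUIT` to that thread (via `PostThreadMessageW`) is one way to stop it.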

Keep audio in memory. Early versions wrote a WAV to disk, passed the path to Whisper, then deleted it. Cutting out the disk round-trip by passing the numpy array directly made the whole flow noticeably snappier — and one less file to worry about cleaning up.
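Passing the array directly works because faster-whisper's `WhisperModel.transcribe` accepts either a file path or an audio waveform. A minimal sketch, assuming `model` is a loaded `WhisperModel` (the `transcribe_array` name is hypothetical):

```python
from typing import Any, Optional

import numpy as np


def transcribe_array(model: Any, audio: np.ndarray, language: Optional[str] = None) -> str:
    """Transcribe a 16 kHz mono waveform without writing a temp file."""
    audio = np.asarray(audio, dtype=np.float32).reshape(-1)  # 1-D float32 samples
    # transcribe() returns a lazy generator of segments; decoding happens while iterating.
    segments, _info = model.transcribe(audio, language=language)
    # Segment texts usually carry a leading space, so join without a separator and strip.
    return "".join(segment.text for segment in segments).strip()
```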

Font sizes in Qt stylesheets: use pt, not px. If you set font-size: 13px in a Qt stylesheet, some widgets internally read the font's point size, get -1 (a font sized in pixels has no point size), and Qt prints a warning. Switching to pt units made the warnings go away.
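Converting an existing px value is simple arithmetic: one point is 1/72 inch, so at the common 96 DPI baseline (Windows at 100% scaling), pt = px × 72 / 96. The helpers below are hypothetical and round to whole points, so 13px becomes 10pt rather than 9.75pt.

```python
def px_to_pt(px: float, dpi: float = 96.0) -> int:
    """Convert a pixel size to whole points (1 pt = 1/72 inch), never below 1."""
    return max(1, round(px * 72.0 / dpi))


def font_rule(px: float, dpi: float = 96.0) -> str:
    """Stylesheet rule using pt units instead of px."""
    return f"font-size: {px_to_pt(px, dpi)}pt;"
```

With PySide6 this would be applied as, for example, `label.setStyleSheet(font_rule(13))`.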

Try it

```
git clone https://github.com/konradozog/Ratatoskr.git
cd Ratatoskr
python -m venv .venv
.venv\Scripts\activate
pip install .
ratatoskr
```

Windows 10/11, Python 3.10+. That’s all you need.