Mamrot — A Vibe-Coded Audio Transcriber and Cutter

I had a pile of screen-recording narrations that needed to be sliced into individual clips — one per topic. Every tool I found either had no GUI or expected me to type start/end timestamps by hand. Scrubbing a waveform and eyeballing boundaries for dozens of segments is tedious. What I actually wanted was to read what was said, decide where to cut, and let the tool figure out the timestamps.

So I vibe-coded Mamrot (Polish for “mumbling” — because even when you mumble, it still understands): a desktop app that transcribes audio with Whisper, gives you a text editor to split and merge lines, and then exports each segment as a separate audio file.

The three-tab workflow

The whole UI is three tabs — Transcribe, Editor, Cutter — each handling one stage of the pipeline.

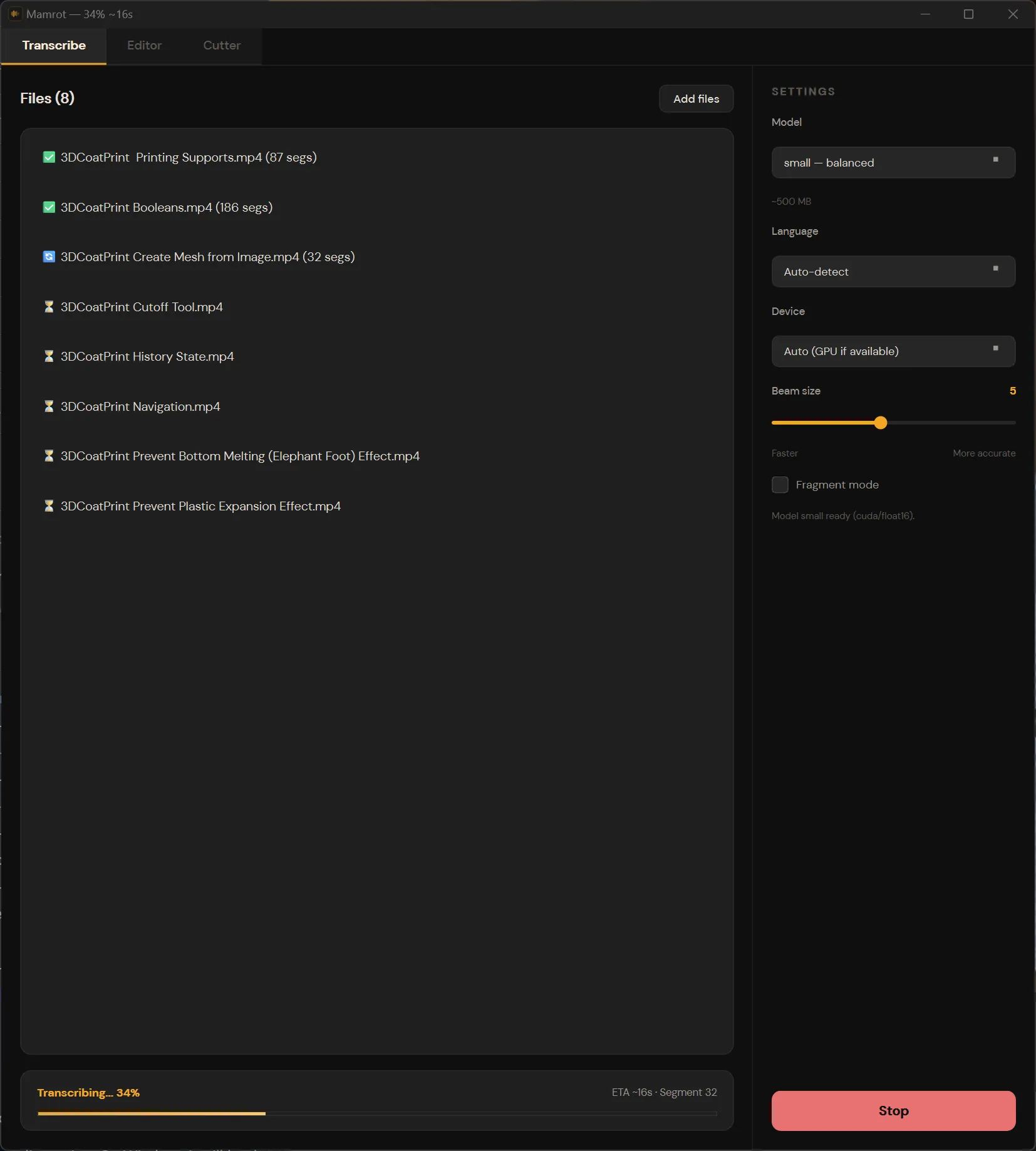

1. Transcribe

Drop files in, pick a Whisper model size (tiny through large-v3), choose a language or let it auto-detect, and hit go. It runs in the background — you can queue a whole folder and walk away. Transcriptions are saved to JSON files alongside the source audio, so you never have to re-transcribe.
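The "never re-transcribe" behavior can be sketched roughly like this. It is an illustration rather than Mamrot's actual code: the sidecar naming (same stem, .json suffix) and the `transcribe` callback are assumptions.

```python
import json
from pathlib import Path
from typing import Callable


def sidecar_path(audio: Path) -> Path:
    # Assumed naming scheme: talk.mp3 -> talk.json in the same folder.
    return audio.with_suffix(".json")


def load_or_transcribe(audio: Path, transcribe: Callable[[Path], dict]) -> dict:
    """Return the cached transcript if one exists, otherwise create and save it."""
    cache = sidecar_path(audio)
    if cache.exists():
        return json.loads(cache.read_text(encoding="utf-8"))
    result = transcribe(audio)  # hypothetical callback that runs the model
    cache.write_text(
        json.dumps(result, ensure_ascii=False, indent=2), encoding="utf-8"
    )
    return result
```

The second call for the same file reads the JSON and never touches the model, which is what makes reopening a large folder cheap.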

Under the hood it’s faster-whisper with word-level timestamps enabled. It uses the GPU (CUDA) if one is available and falls back to the CPU with int8 quantization otherwise. VAD filtering is on by default to skip silence and improve accuracy.
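For reference, a minimal sketch of that setup with faster-whisper. The function names, the default model size, and the float16 compute type on GPU are assumptions on my part; only the int8 CPU fallback, word timestamps, and VAD filtering come from the description above.

```python
from typing import Optional


def pick_backend(cuda_available: bool) -> tuple[str, str]:
    """Map hardware to (device, compute_type) for faster-whisper."""
    # int8 on CPU is stated above; float16 on GPU is an assumption.
    return ("cuda", "float16") if cuda_available else ("cpu", "int8")


def transcribe(
    path: str, model_size: str = "small", language: Optional[str] = None
) -> list[dict]:
    # Imported lazily so pick_backend() works without the libraries installed.
    import ctranslate2
    from faster_whisper import WhisperModel

    device, compute_type = pick_backend(ctranslate2.get_cuda_device_count() > 0)
    model = WhisperModel(model_size, device=device, compute_type=compute_type)
    # language=None lets the model auto-detect the language.
    segments, _info = model.transcribe(
        path, language=language, word_timestamps=True, vad_filter=True
    )
    # `segments` is a generator; iterating it is what runs the transcription.
    return [
        {
            "start": seg.start,
            "end": seg.end,
            "text": seg.text.strip(),
            "words": [
                {"word": w.word, "start": w.start, "end": w.end} for w in seg.words
            ],
        }
        for seg in segments
    ]
```

Keeping the per-word list in the result is what the editor needs later: every split or merge can be resolved from those word times alone.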

Supported input: MP3, WAV, FLAC, OGG, M4A, AAC, WMA — plus video formats (MP4, MKV, AVI, MOV, WebM) since FFmpeg extracts the audio track automatically.

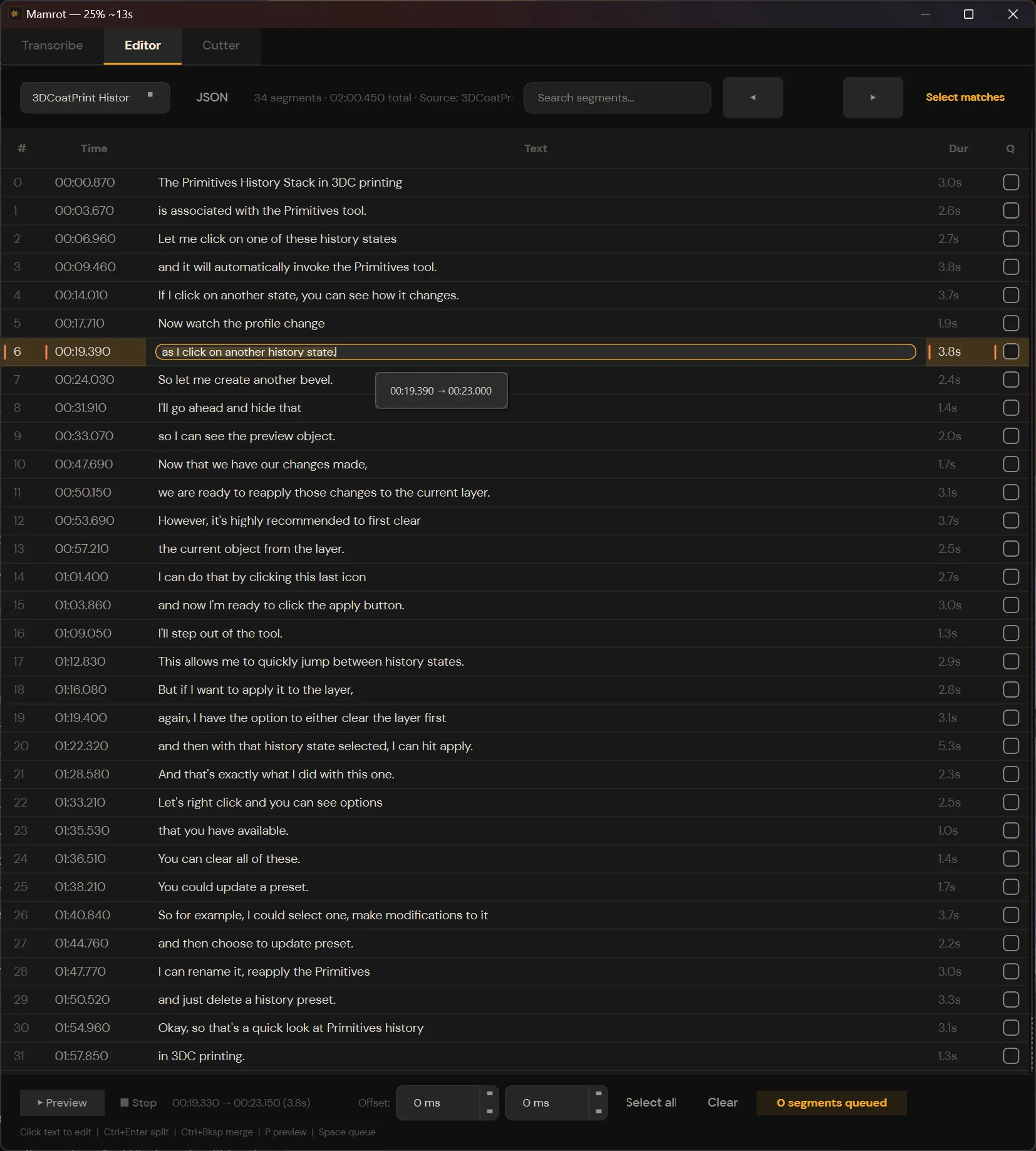

2. Editor

This is where the actual work happens. The transcript loads as a table of timed segments. You can:

- Click any line to edit the text inline.

- Press Ctrl+Enter to split a segment at the cursor — the app snaps to the nearest word boundary and calculates new timestamps automatically.

- Press Ctrl+Delete or Ctrl+Backspace to merge a segment with the one above it.

- Search across the transcript and select all matches at once.

- Queue segments for export by checking them off.

The split/merge behavior is the core feature. Because Whisper gives word-level timestamps, the app already has a start and end time for every word, so splitting a line never requires guessing or typing a timestamp. You just read the text, decide “this sentence should be a separate clip,” press Ctrl+Enter, and the new boundaries are taken from the words on either side of the split.
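Here is a small sketch of how a split can snap to a word boundary and derive timestamps from the words. The `Word` and `Segment` classes and the function names are hypothetical, and it assumes the segment text is just the words joined by single spaces.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds
    end: float    # seconds


@dataclass
class Segment:
    words: list[Word]

    @property
    def text(self) -> str:
        return " ".join(w.text for w in self.words)

    @property
    def start(self) -> float:
        return self.words[0].start

    @property
    def end(self) -> float:
        return self.words[-1].end


def split_at_cursor(seg: Segment, cursor: int) -> tuple[Segment, Segment]:
    """Split `seg` at the word boundary closest to character offset `cursor`."""
    if len(seg.words) < 2:
        raise ValueError("need at least two words to split")
    # Character offset at which each word begins in the joined text.
    offsets, pos = [], 0
    for w in seg.words:
        offsets.append(pos)
        pos += len(w.text) + 1  # +1 for the joining space
    # Candidates are the starts of words 1..n-1, so neither half is empty.
    idx = min(range(1, len(seg.words)), key=lambda i: abs(offsets[i] - cursor))
    return Segment(seg.words[:idx]), Segment(seg.words[idx:])


def merge(upper: Segment, lower: Segment) -> Segment:
    """Merge a segment with the one above it; times follow from the words."""
    return Segment(upper.words + lower.words)
```

Because start and end are derived from the first and last word, a merge is just list concatenation and a split is just a slice. A real editor also has to cope with text that was edited inline and no longer matches the word list, which this sketch ignores.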

After splitting and merging, the boundaries aren’t always perfect: Whisper’s word timestamps can be slightly off, clipping a syllable or leaving dead air. Each segment has its own offset controls so you can nudge the start or end by a few hundred milliseconds before exporting.

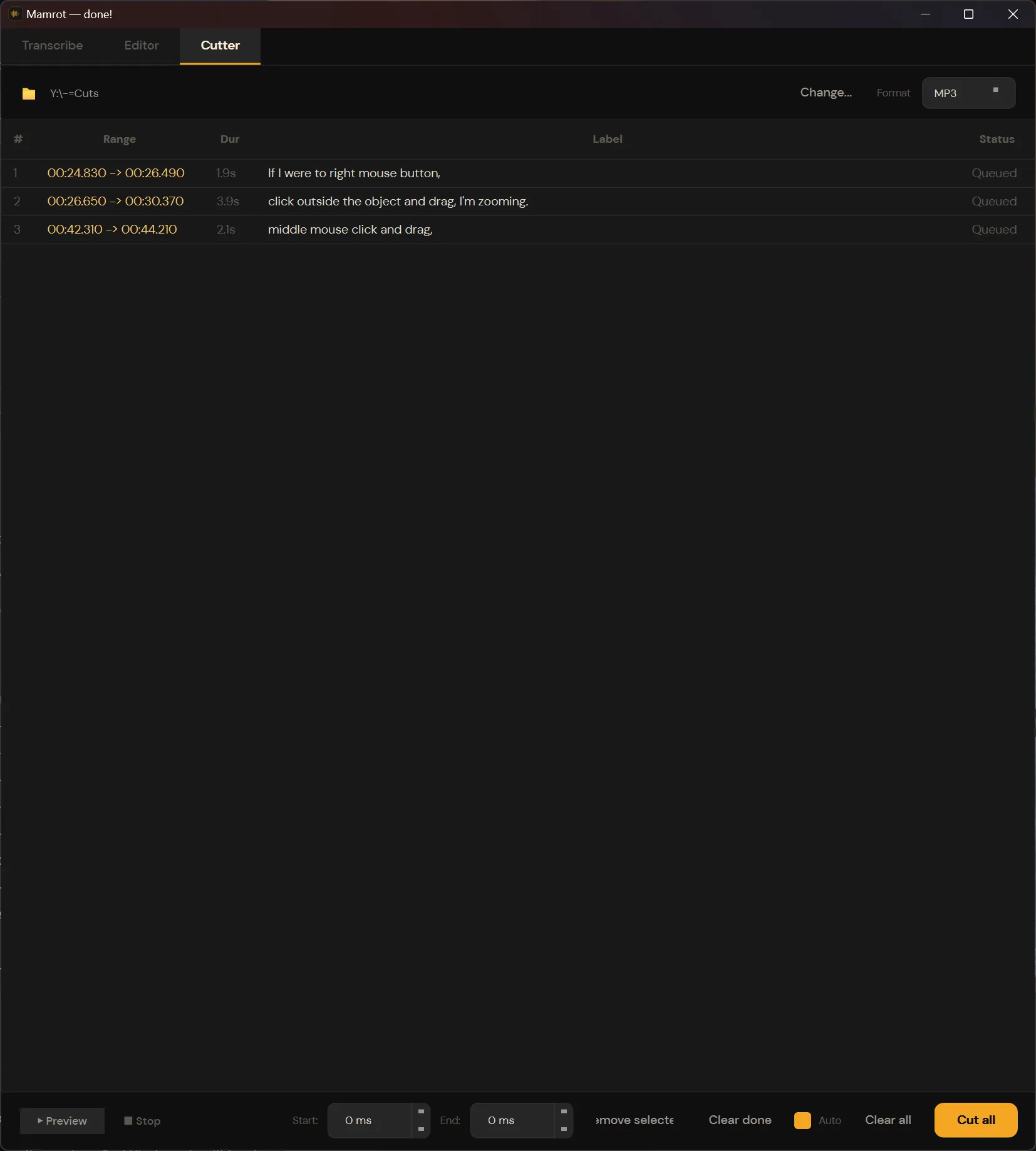

3. Cutter

Once you’ve queued your segments, switch to the Cutter tab. Pick an output format and directory, then hit “Cut all.” Each queued segment becomes a separate audio file.

Output formats: WAV, FLAC, MP3, OGG, AAC (M4A), Opus.

The cutter pads each cut by default (60 ms before, 150 ms after) to compensate for the imprecision of Whisper’s word boundaries, so syllables at the edges don’t get clipped. The per-segment offsets from the Editor still apply if a clip needs finer adjustment.
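The time math for one clip might look like the sketch below. The function names are made up, and the way offsets and padding combine (simple addition) is an assumption; only the 60 ms and 150 ms defaults come from the app. The FFmpeg options used (-ss, -i, -t, -vn, -y) are standard ones.

```python
PAD_BEFORE = 0.060  # seconds of padding before the first word
PAD_AFTER = 0.150   # seconds of padding after the last word


def cut_window(
    start: float, end: float, start_offset: float = 0.0, end_offset: float = 0.0
) -> tuple[float, float]:
    """Apply per-segment offsets and default padding; clamp the start at zero."""
    padded_start = max(0.0, start + start_offset - PAD_BEFORE)
    padded_end = end + end_offset + PAD_AFTER
    if padded_end <= padded_start:
        raise ValueError("offsets leave an empty segment")
    return padded_start, padded_end


def ffmpeg_cut_args(src: str, dst: str, start: float, end: float) -> list[str]:
    """Build an FFmpeg command that writes the [start, end) range of src to dst."""
    return [
        "ffmpeg", "-y",               # overwrite the output without asking
        "-ss", f"{start:.3f}",        # seek in the input
        "-i", src,
        "-t", f"{end - start:.3f}",   # duration of the clip
        "-vn",                        # drop any video stream
        dst,
    ]
```

Passing the result to `subprocess.run(args, check=True)` would perform the cut. The end is not clamped to the file length here; FFmpeg simply stops at the end of the input.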

Filenames are auto-generated from the transcript text, so you end up with files like if-i-were-to-right-mouse-button.mp3 instead of clip_001.mp3.
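A filename like that can be produced with a few lines of slug logic. This is a sketch, not the app's code: the `slugify` name, the eight-word cap, and the "clip" fallback are assumptions.

```python
import re
import unicodedata


def slugify(text: str, max_words: int = 8) -> str:
    """Turn transcript text into a lowercase, hyphen-separated filename stem."""
    # Fold accented letters to ASCII where a decomposition exists.
    ascii_text = (
        unicodedata.normalize("NFKD", text).encode("ascii", "ignore").decode("ascii")
    )
    # Drop apostrophes so "don't" becomes "dont" rather than "don-t".
    cleaned = ascii_text.lower().replace("'", "")
    words = re.findall(r"[a-z0-9]+", cleaned)
    # Fall back to a generic name when nothing usable is left.
    return "-".join(words[:max_words]) or "clip"
```

Two segments that start with the same words would collide, so a real implementation also needs a suffix or counter for duplicates.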

Why vibe-code it?

I could have scripted this: parse an SRT, loop through timestamps, call FFmpeg. But the whole point was that I didn’t want to think in timestamps. I wanted to read text, make editorial decisions visually, and let the machine handle the time math.

Vibe-coding with an AI agent made this practical as a weekend project. The UI is PySide6 (Qt6) with a custom dark theme. The transcription engine, the editor logic, the FFmpeg integration — each piece is straightforward on its own, but wiring them into a polished desktop app with keyboard shortcuts, batch processing, and persistent state would have been a week of boilerplate without AI assistance.

Quick architecture

- GUI: PySide6 (Qt6), custom dark theme with orange accents

- Transcription: faster-whisper with word-level timestamps

- Audio processing: FFmpeg (auto-downloaded on Windows)

- Transcript export: SRT, VTT, CSV, JSON (with metadata)

- Threading: background workers for transcription and cutting — UI stays responsive

- Persistence: recent transcripts, window state, and cutter settings saved to ~/.mamrot/
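Of the items above, transcript export is the easiest to illustrate. The sketch below writes SRT cues from segments; the function names and the dict keys ('start', 'end', 'text') are assumptions, while the HH:MM:SS,mmm timestamp layout is part of the SRT format.

```python
def srt_timestamp(seconds: float) -> str:
    """Format seconds as HH:MM:SS,mmm (SRT uses a comma before milliseconds)."""
    total_ms = round(seconds * 1000)
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, ms = divmod(rest, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: list[dict]) -> str:
    """Serialize segments with 'start', 'end' and 'text' keys as SRT cues."""
    cues = []
    for index, seg in enumerate(segments, start=1):
        cues.append(
            f"{index}\n"
            f"{srt_timestamp(seg['start'])} --> {srt_timestamp(seg['end'])}\n"
            f"{seg['text']}\n"
        )
    # Cues are separated by a blank line.
    return "\n".join(cues)
```

VTT is close to the same thing with a WEBVTT header and a dot instead of the comma in each timestamp.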

Try it

The full source is on GitHub: Mamrot.

To install, run pip install . from the repository root, then launch the app with mamrot. It needs Python 3.10+ and FFmpeg (on Windows, the app will offer to download FFmpeg for you).

The takeaway: if your workflow involves listening to audio to find cut points, you’re doing it wrong. Transcribe first, read the text, split where it makes sense, export. Let the machine deal with milliseconds — your job is editorial, not clerical.